Will our reliance on technology compromise our relationships with other humans, and will the benefits appear at both the individual and the societal level? It depends. Someone who has had trouble with romance for many years may end up living with a robot girlfriend rather than a human one. If they are happier in their personal relationships, they may perform their role as citizens better. Other humans, though, may not like competing with robots.

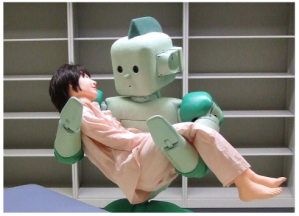

With Paro, children are onto something: the elderly are taken with the robots. Most are accepting and there are times when some seem to prefer a robot with simple demands to a person with more complicated ones. Quiet and compliant robots might become rivals for affection. People want love on their own terms… They want to feel that they are enough.

“It is common for people to talk to cars and stereos, household appliances, and kitchen ovens. The robots’ special feature is that they simulate listening, which meets a human vulnerability: people want to be heard. From there it seems a small step to finding ourselves in a place where people take their robots into private spaces to confide in them. In this solitude, people experience new intimacies. The gap between experiences and reality widens. People feel heard but the robots cannot hear.”

Humans don’t want to get hurt: they fear rejection and pain, and they desire acceptance and belonging. So a relationship with a robot that will never leave, betray, or reject them is logical, but it will alter human behavior, making people less willing to change and compromise.

It could lead to a situation in which people become so intolerant of each other that they can only have robot companions, not human ones (because humans are so hard to handle). There will then be even more isolation between humans, as each lives in their own bubble or delusional world.

We have more love in ourselves than people can take from us… We want to give love, but there is not always a person to receive it… That is where robots come into play… Yes, we should direct that surplus of love toward people. But people want to receive love and care on their own terms. A robot gives us an opportunity to love and to be useful and, what we don’t always get in reality, to get the same in return… No one wants our unconditional love and care on our terms, and we don’t always want love on their terms either: it is too demanding…

Humans need validation that we are right and enough the way we are. Robots don’t cure our flaws, but they don’t see them either, and they give us an opportunity for better realities, where we are heroes, or at least good.

We put robots on the terrain of meaning, but they don’t know what we mean. Moral questions come up as robotic companions not only “cure” the loneliness of seniors but assuage the regrets of their families. An older person seems content, a child feels less guilty. As we learn to get the most out of robots, we may lower our expectations of all relationships, including those with people.

Re-posted from The Ultimate Answer Blog